Announcing Project Kinesis: A Research Project Advancing Intent-Driven Human-Machine Interaction

Today, we’re excited to unveil Project Kinesis, a study of the language of human movement. It is a research initiative to understand how muscle signals can help us build more natural, intuitive, and responsive ways to interact with technology. Earlier this month, we conducted a pilot study using surface electromyography (sEMG), marking the first step in our long-term mission to build the world’s most comprehensive dataset of human movements. Our goal is to move beyond simple motion tracking and detect human intent at its source. Kinesis: Gesture is a comprehensive library of hand gestures, including forearm flexion, wrist movements, and finger-level gestures.

The Challenge of Seamless Interaction

We believe technology should extend our capabilities, not demand our attention. Today, we navigate the digital world by tapping on glass or clicking buttons — actions that pull us away from the world around us. To build the next computing platform, we need to move past physical touch and understand the subtle physiological cues that precede it.

Why Muscle Signals Matter: A Window into Thoughtful Movement

Surface electromyography (sEMG) offers one of the most direct pathways to achieving this. By measuring electrical signals generated by muscle activation, sEMG enables real-time interpretation of a person’s intended movement with millisecond precision. The signal is silent, fast, and expressive, ideal for interaction in constrained, mobile, or high-noise environments.

Our approach to sEMG moves beyond academic research or single-device control. It lays the foundation for a general-purpose muscle–machine interface that scales across users, contexts, and applications.

Kinesis: Gesture Pilot

To validate this approach, we conducted a structured pilot study involving 25 participants using Anthriq’s proprietary sEMG armband, Impulse. The armband features an 8-channel electrode array ergonomically positioned to record muscle activations from the forearm with high precision. Video recordings were simultaneously captured and time-synced with sEMG data to enable cross-referencing and annotation accuracy. Participants performed a curated set of 20 distinct hand, wrist, and finger gestures, each repeated twice to capture intra-subject variability.

The study was designed to establish proof-of-principle — that clean, structured, and repeatable muscle activation data could be collected reliably across a set of gestures and prepared for large-scale model training. Real-time annotations were time-stamped and synchronized with both video and EMG signals, ensuring the dataset is suitable for downstream model training, signal analysis, and algorithmic benchmarking.

Study Parameters Summary

- Participants: 25 healthy adults (Age: 18–80 years)

- Device: Anthriq Impulse sEMG Armband (8 channels)

- Sampling Rate: 960 Hz (sEMG), 30 FPS (Video)

- Number of Gestures: 20 predefined hand/wrist/finger gestures

- Repetitions: 2 repetitions per gesture per participant

- Environment: Controlled lab setting, non-metal furniture, stable lighting

- Calibration: 2–5 min warm‑up for baseline normalization

- Data Collected: Raw sEMG, preprocessed sEMG, synchronized video, annotations

- Metadata: Project details, setup and device details, comprehensive participant details (demographic, behavioural, socio-economic, etc.)

- Format: Cross-referenced video + EMG, both time-aligned

- Privacy Measures: IEC Approved, Arm-only video (no identifiable features), full de‑identification

All recordings underwent pre- and post-session QA checks to validate signal quality. Sample data includes clean waveform plots and time-aligned gesture video frames, providing a reference for training, benchmarking, and reproducibility.
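The sampling rates listed above imply a simple correspondence between the two streams: at 960 Hz and 30 FPS, each video frame spans 960 / 30 = 32 sEMG samples. The following is a minimal sketch of that index arithmetic, assuming both streams start at the same instant and run without clock drift. The function names are hypothetical and are not part of the study's tooling.

```python
SEMG_RATE_HZ = 960  # sEMG sampling rate from the study parameters
VIDEO_FPS = 30      # video frame rate from the study parameters

# Number of sEMG samples recorded during one video frame (960 / 30 = 32).
SAMPLES_PER_FRAME = SEMG_RATE_HZ // VIDEO_FPS


def frame_to_sample_range(frame_index: int) -> range:
    """Return the sEMG sample indices that fall inside one video frame.

    Assumes sample 0 and frame 0 begin at the same instant (hypothetical).
    """
    start = frame_index * SAMPLES_PER_FRAME
    return range(start, start + SAMPLES_PER_FRAME)


def sample_to_frame(sample_index: int) -> int:
    """Return the index of the video frame during which a sample was taken."""
    return sample_index // SAMPLES_PER_FRAME


if __name__ == "__main__":
    window = frame_to_sample_range(2)
    print(window.start, window.stop)  # first sample and one-past-last sample of frame 2
    print(sample_to_frame(95))
```

In a real recording the two clocks would need an explicit shared timestamp or sync marker; the integer mapping here only holds under the stated no-drift assumption.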

Outcome of the Study

The results confirm the viability of structured sEMG data collection at scale, both in terms of signal quality and downstream machine learning performance.

Metrics Overview:

- Baseline stability evaluation: The baseline is stable across subjects and within channels, as the baseline RMS drift analysis below shows.

- 70–80% of channels stay within ±15% of the optimal baseline range, despite heavy arm movement

- Real-time quality control successfully identifies outliers

- Predictable behavior - consistent patterns over time

- Outlier identification enables targeted calibration and optimization

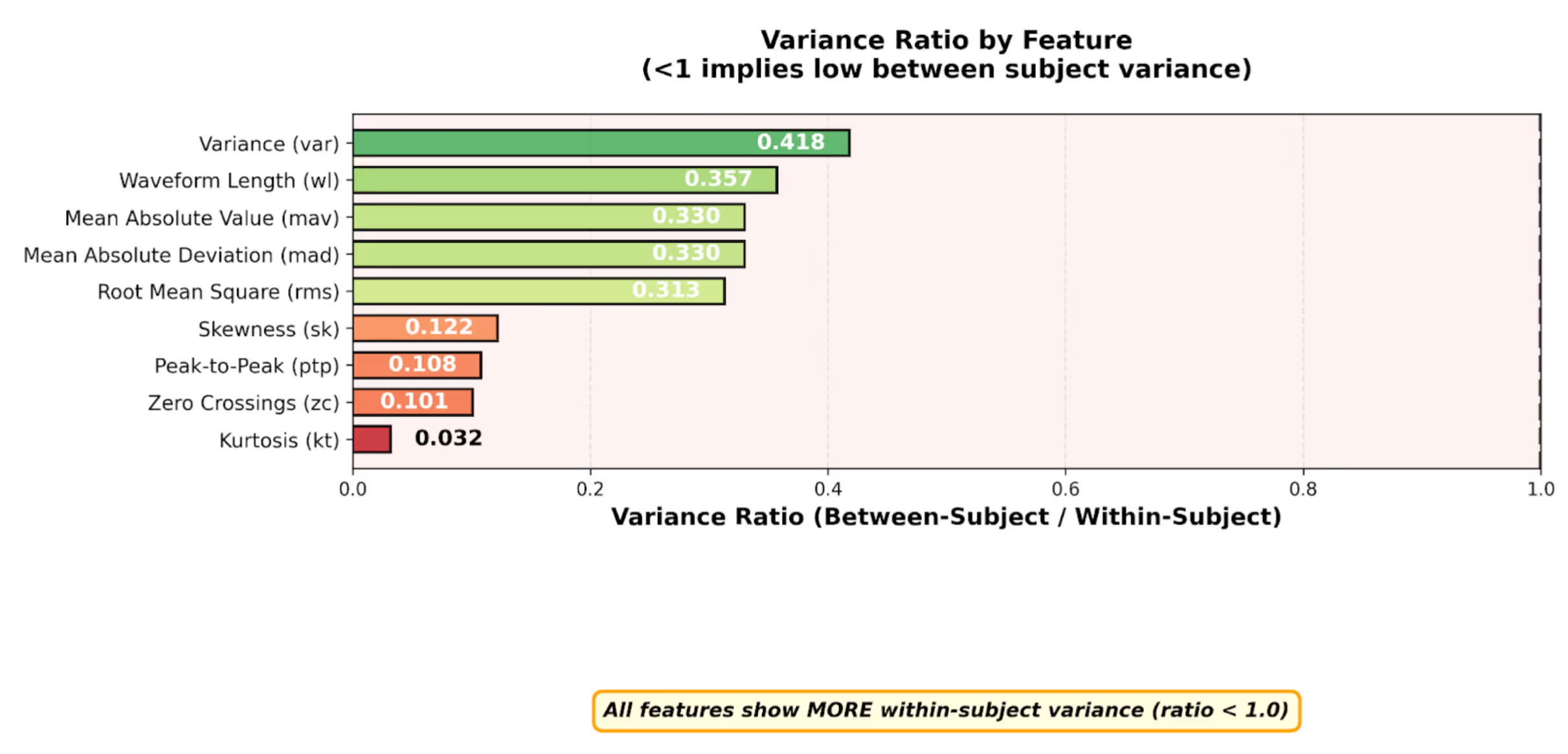
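One way to picture the baseline adherence figure is as a windowed RMS check against a reference level. The sketch below is an illustration under stated assumptions, not the analysis used in the study: the reference value, the window length, and the per-channel numbers are all made up, and the post does not specify how the "optimal range" was defined.

```python
import math


def rms(samples):
    """Root mean square of a sequence of samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def windowed_rms(signal, window):
    """RMS over consecutive non-overlapping windows.

    A trailing partial window is dropped.
    """
    return [rms(signal[i:i + window])
            for i in range(0, len(signal) - window + 1, window)]


def fraction_within_tolerance(values, reference, tolerance=0.15):
    """Share of values lying within +/- tolerance of the reference."""
    low, high = reference * (1 - tolerance), reference * (1 + tolerance)
    return sum(low <= v <= high for v in values) / len(values)


# Toy baseline RMS values for 8 channels (made up for illustration only).
channel_baseline_rms = [1.00, 1.05, 0.95, 1.10, 0.90, 1.30, 1.02, 0.70]

# Hypothetical reference level of 1.0 standing in for the "optimal" baseline.
adherent = fraction_within_tolerance(channel_baseline_rms, reference=1.0)
print(f"{adherent:.0%} of channels within ±15% of the reference")
```

Channels that fall outside the band are the outliers that would be flagged for targeted calibration.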

- Intra- and inter-subject variability measurements: Signal consistency is determined by measuring variance within each subject and between different subjects. We expect low variation between subjects owing to our robust device and data collection protocols. Within each subject, however, we expect variation because each subject performs different gestures, and each gesture produces its own signal pattern. We measure variability with 9 statistical features, each expressed as a ratio of between-subject to within-subject variability. All nine ratios are below 1, implying that subject-to-subject variability is low relative to within-subject variability.

- Ratio > 1: High variability between subjects

- Ratio < 1: High variability within subjects

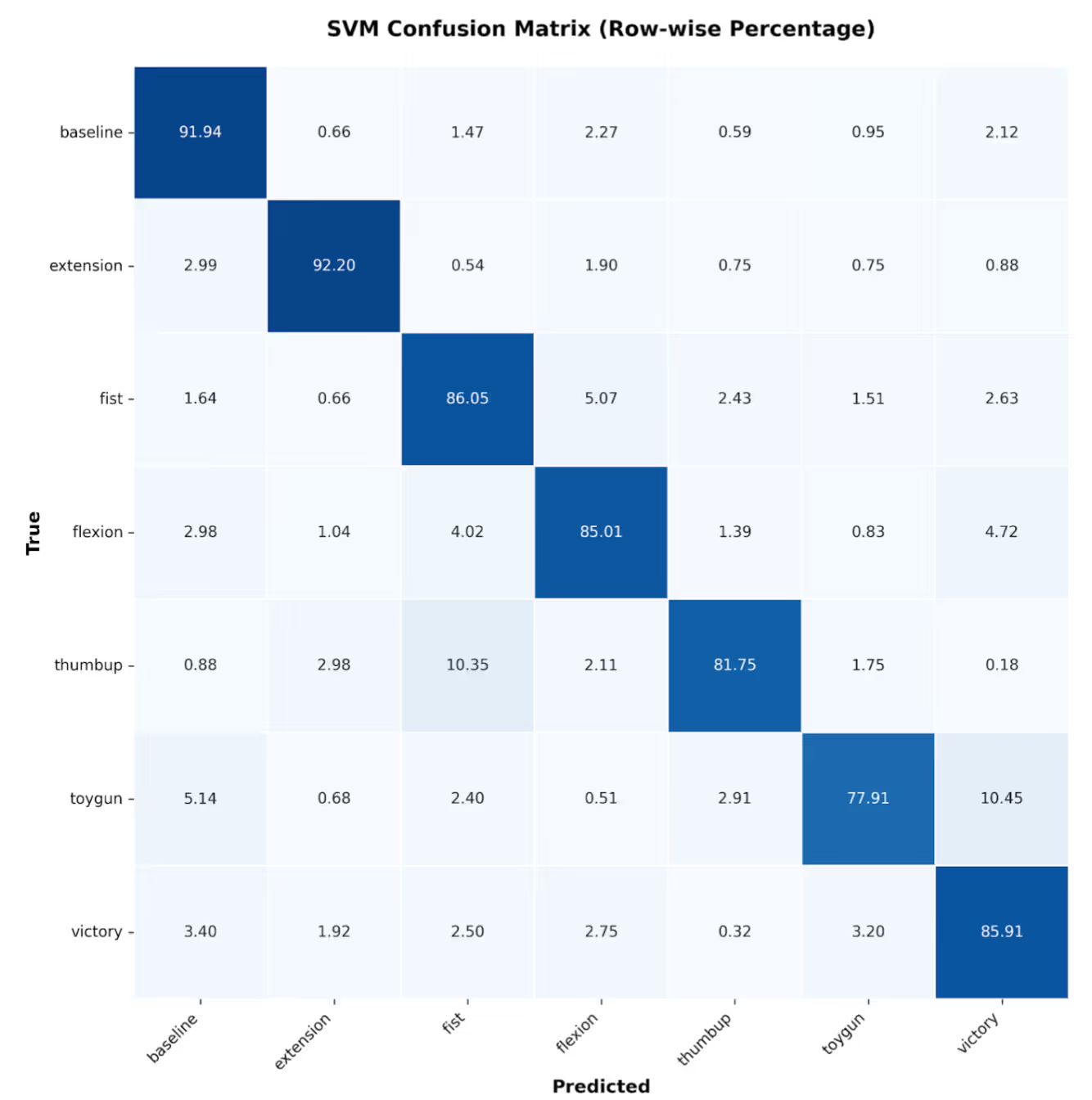
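The ratio interpretation above can be sketched for a single feature as follows. The post does not give its exact formula, so this assumes one common definition: the variance of the per-subject means divided by the average per-subject variance. The function name and the toy values are hypothetical.

```python
from statistics import mean, variance


def variability_ratio(values_by_subject):
    """Between-subject variance divided by mean within-subject variance.

    values_by_subject maps a subject id to that subject's values of one
    feature (for example, one value per gesture repetition).
    Ratio > 1: subjects differ more from each other than from themselves.
    Ratio < 1: variation within each subject dominates.
    """
    subject_means = [mean(values) for values in values_by_subject.values()]
    between = variance(subject_means)
    within = mean([variance(values) for values in values_by_subject.values()])
    return between / within


# Toy feature values for three subjects (made up for illustration only).
toy_feature = {
    "S01": [1.0, 3.0, 5.0],
    "S02": [2.0, 4.0, 6.0],
    "S03": [3.0, 5.0, 7.0],
}

ratio = variability_ratio(toy_feature)
print(f"variability ratio = {ratio:.2f}")  # 0.25: within-subject variation dominates
```

Repeating this for each of the statistical features gives one ratio per feature, which is the quantity compared against 1.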

- ML classification of gestures: The acquired dataset exhibited high fidelity, strong inter-subject consistency, and a favorable signal-to-noise ratio. This enabled a robust 7-class classification that achieved high accuracy across 7 participants. Per-class results:

- Baseline: 91.94% correctly classified

- Extension: 92.20% correctly classified (best performance)

- Fist: 86.05% correctly classified

- Flexion: 85.01% correctly classified

- Victory: 85.91% correctly classified

- Thumbup: 81.75% correctly classified

- Toygun: 77.91% correctly classified (lowest performance)

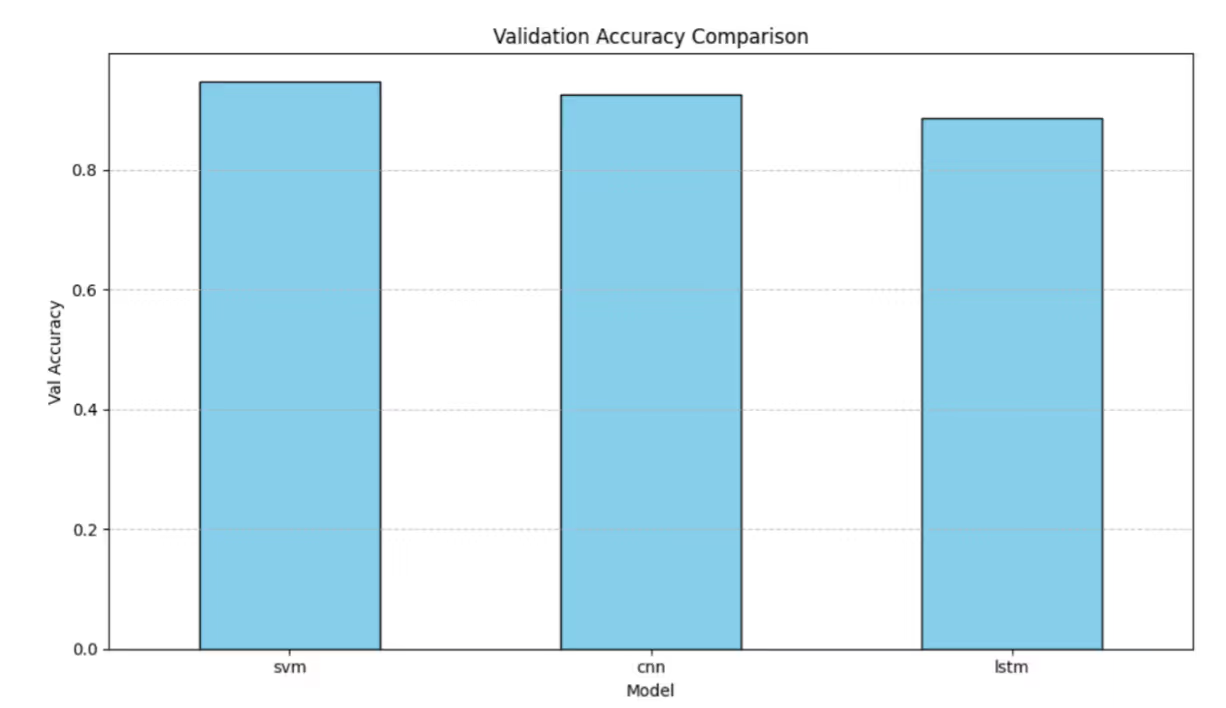

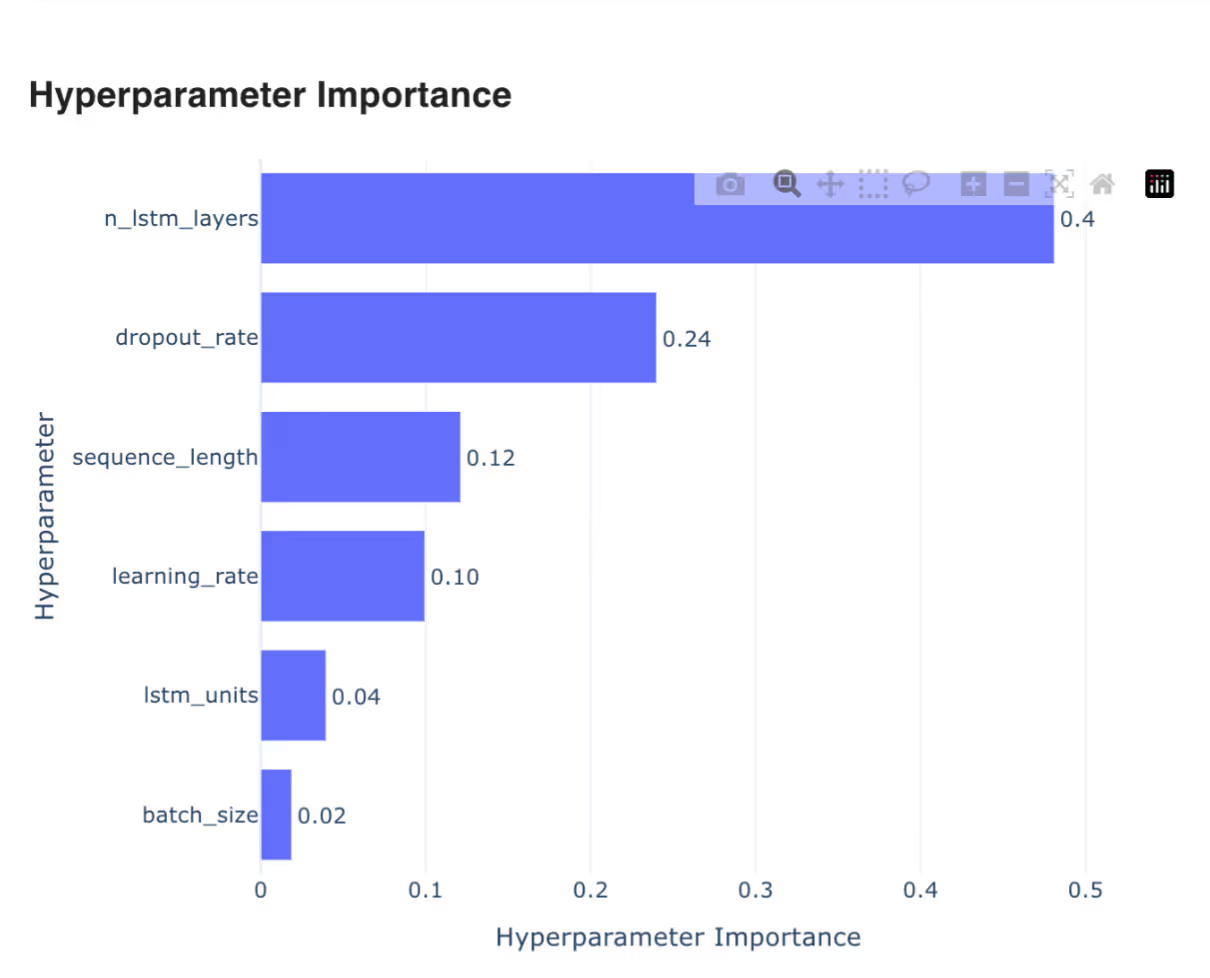
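Per-class figures like those listed above are typically the share of each gesture's samples that the model labels correctly, which corresponds to the diagonal of a row-normalized confusion matrix. A minimal sketch of that calculation follows, assuming this is the definition in use (the post does not state it). The gesture names come from the list above, but the labels and predictions are toy values.

```python
from collections import Counter


def per_class_accuracy(true_labels, predicted_labels):
    """Fraction of samples of each true class that were predicted correctly."""
    totals = Counter(true_labels)
    correct = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {label: correct[label] / totals[label] for label in totals}


# Toy labels for two of the seven classes (made up for illustration only).
true_labels = ["Fist", "Fist", "Fist", "Fist", "Toygun", "Toygun"]
predicted = ["Fist", "Fist", "Fist", "Toygun", "Toygun", "Fist"]

for gesture, accuracy in per_class_accuracy(true_labels, predicted).items():
    print(f"{gesture}: {accuracy:.2%} correctly classified")
```

Reporting accuracy per class rather than as a single overall number makes it easier to see which gestures are most often confused with one another.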

- Validation accuracy of gestures via different ML models: The dataset quality proved sufficient for comprehensive hyperparameter optimization to enhance model performance. Presented below are hyperparameter tuning outcomes for LSTM and SVM architectures.

Sample Data:

Conclusion:

Across participants and gestures, the dataset showed consistent, high‑quality patterns:

- High signal fidelity: sEMG recordings showed minimal baseline drift, low artifact noise, and clean waveform morphology across all 8 channels.

- Gesture separability: Distinct muscle activation patterns were consistently observed across all 20 gestures, with strong intra-subject consistency and inter-subject repeatability.

- Annotation precision: Real-time labels were time-synced with video and EMG data, enabling high-quality alignment for downstream tasks.

- Model validation: Preliminary ML models trained on the dataset achieved strong classification accuracy, confirming signal viability for gesture recognition.

Pipeline scalability:

The study validates our full-stack data pipeline — including hardware, capture protocol, annotation tooling, and artifact rejection — as ready for broader deployments across clinical, industrial, and consumer applications.

Applications across Industries: where this data accelerates innovation

- Next-Generation Interfaces: Enables low-latency, high-accuracy control for spatial computing devices, AR/VR headsets, and ambient computing systems.

- Healthcare & Rehabilitation: Supports motor recovery tracking, fatigue assessment, and real-time neuromuscular monitoring.

- Neuroprosthetics & Assistive Tech: Enables adaptive, personalized control models for prosthetic limbs and assistive communication devices.

- Industrial Robotics & Exoskeletons: Integrates operator intent into collaborative systems for safer, more intuitive task execution.

- Automotive & Ambient Interfaces: Enables silent, muscle-based gesture control in high-noise or hands-busy environments.

Each of these domains requires high-fidelity data that reflects real-world muscle behavior, precisely what this dataset was built to provide.

Future Vision

The Kinesis: Gesture pilot is the first step toward building the world's most comprehensive cross-modal library of gestures. The broader Kinesis initiative will encompass additional projects focused on various types of muscle movements, including walking and running patterns, posture control, smooth muscle activation, and more. Our vision extends beyond raw biosignals. We're building curated datasets, pre-trained models, and deployment-ready pipelines that empower partners to accelerate their products and research.

We look forward to engaging with research institutions, product innovators, and platform builders who share this vision and want to help us build a future where understanding human intent begins at the signal itself.

Note: All data collection was conducted with informed consent and managed under strict privacy and ethical standards. Participant identities are anonymized, and future dataset releases will align with global data protection frameworks.

- Research Team, Anthriq
